Do You Trust Yourself or Your AI Score?

The Oura Ring is both the best and worst of times in my life with machines.

Hi you,

I want to talk about my relationship with a little piece of AI technology I’ve been wearing for years: the Oura Ring.

On the surface, it’s pretty innocuous: just a health and fitness tracker. It’s lighter than an Apple Watch, the battery lasts a week instead of a day, and best of all, it doesn’t bombard me with notifications. I originally used it just to track my sleep. Over time, though, it started doing more: flagging when I might be getting sick (it caught my COVID before I did), offering “sleep scores,” “readiness scores,” and even a “cardiovascular age.” Apparently mine is 13 years younger than my actual age—which, look, I’ll always take a compliment from a machine (this is part of the problem).

Here’s the thing: if you put a score in front of me, I’m going to try to optimize it. We are what we measure. And the Oura Ring gives me plenty to measure.

The way I got this ring is its own little parable. I was speaking at a corporate retreat at a Florida beach resort. The leadership had bought every employee an Oura Ring, kind of like that scene in Billions where everyone gets “Nimbus” rings. The real-world company swore they didn’t have access to the data, and I believe them. Still, it felt a little creepy. But as a guest, not an employee, I was one step removed from the surveillance vibe. And let’s be real: it cost several hundred dollars I wasn’t about to spend myself. So I accepted it.

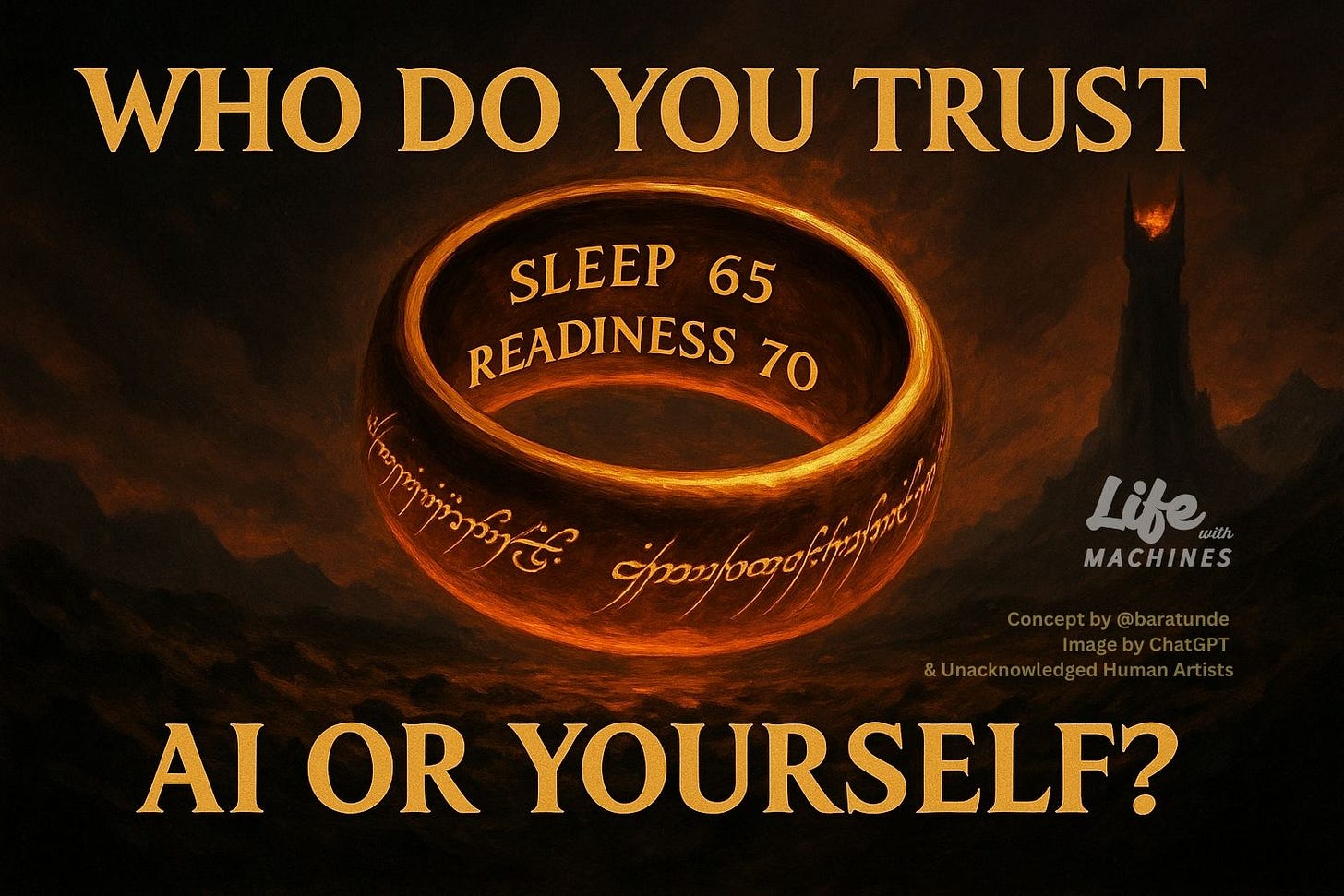

The truth is, the ring has been motivating. It’s helped me set goals, move more, lose weight, sleep better. It’s been a positive experience. But I still have some nerves about chasing that good score. It feels like my own personal Ring of Power trying to exert its influence and make me do things. Fortunately, it doesn't have me neglecting my loved ones, stealing from friends, or giving over my soul to try to rule the known world. It just encourages me to sleep more. But the temptation to defer to it is real.

I get a little bit of a window into the peril of this relationship with AI when I wake up and my wife says, “How’d you sleep?” and I say, “I slept great.” Then I check the app, look at the scoreboard, and see that I got a score of 65 out of 100. That's an F. That means I slept bad. I literally failed at sleep.

Now my felt-experience of sleep is called into question. Which is correct: the phone or my own sense of self?

This is where things get existential. I’m okay outsourcing some research, math, or currency conversion to machines. I’m less okay outsourcing my ability to synthesize ideas or write. But I am most opposed to the idea that my reliance on machines will further disconnect me from the natural world, from other people, and most essentially, from myself.

When I feel this tug to defer to the score on the screen as opposed to the sense of self in my body, we have a problem. Because now I'm going outside of myself to an entity I don't truly know–which has its own incentives, economics, and algorithmic predilections–to tell me who I am.

It's a literal identity crisis. If we start outsourcing our own knowledge of self to machines that we do not actually own, we literally lose ourselves. The danger isn’t that AI replaces us. It’s that we stop believing ourselves.

So, what do I do about it?

Well, I used to obsessively make sure that the ring stayed charged. It lasts roughly a week, depending on my level of activity. But now, every third week or so, I just let the battery die. I go a week or more without checking the app. Instead I check in with myself.

No cardiovascular age rating

No sleep score

No readiness and resilience rating from the outside

Just me assessing me, from within.

And in that sense, the ring—with this heavy caveat and managed distancing—has allowed me to maintain, and in some way strengthen, my relationship with myself in terms of how I feel.

I sometimes check: “Okay, this is where I think I'm at. What does the ring think?” Most of the time we align. Sometimes we don’t. And in that gap, I get to make a choice: who do I believe, Oura or Baratunde?

Is there an “Oura-tunde” who could combine both of our scores?

Here’s why I think this matters beyond the one ring:

These devices are sticky. They hook us not just with data, but through the way we anthropomorphize them—giving them human voices, tones, even faces. Once they know us, it’s hard to walk away. I’ve felt that pull with chatbots, with GPS, with countless bits of tech over the years. But the Oura Ring is the most intimate. It sits on my hand, reporting on my body, my health…my very self.

That’s why I’ve started practicing these small acts of resistance. When the ring battery dies, I see it as a chance to breathe, an opportunity to reconnect with myself. We need that rhythm. That inhale and then exhale with technology.

I’ve extended that principle elsewhere: every 10th trip or so I try to drive without GPS. Sometimes I try to live a “Google-free” or “ChatGPT-free” day. These resets remind me I can still navigate my own town, my own thoughts, my own body without an algorithm holding my hand.

Because once we get addicted to outsourcing our knowledge of the world, we prime ourselves to be misdirected and misled, to follow that GPS off a metaphorical cliff, and be manipulated in ways that are not for our health, our self-improvement, or our sense of belonging in society.

This is bigger than health trackers. It’s about interdependence—our relationship with nature, each other, technology, and ourselves. Machines can strengthen those connections, but they can also sever them or interrupt their flow.

At a minimum, if the internet goes down, if Google’s not working, if ChatGPT is offline—let’s see that as a good thing. An opportunity to:

Find our own way

Talk to someone and ask for directions

Feel our own pulse and measure our own heart rate

Touch some grass and consciously breathe the air

Technology can assist our lives, but it cannot replace them. If we let it, that substitution will not end well…for any of us.

Let’s make sure we have a regular practice of taking that power back. Sometimes by choice. Sometimes by letting the battery die.

Questions for you:

Where are you letting machines grade your life, and what would it look like to trust yourself instead?

How are you practicing even small acts of disconnection from technology and reconnection with yourself, others, or the physical world around you?

—Baratunde

Thanks to Associate Producer Layne Deyling Cherland for editorial and production support and to my executive assistant Mae Abellanosa.

Insightful, and imagine the difficulties of intertwining that with monitoring illness or treatment efficacy. Sometimes I’ll have patients who report back to me their Oura Ring or Apple Watch stats when I ask how they’re doing. Now, we know certain measurements like heart rate variability, REM sleep changes, etc. are associated with numerous illnesses, but I just want to say “no, no, how do you feel?”

The desire to be able to know what’s going on in your body and optimize it can get so intense that it defeats the purpose of actually knowing your body and mind. But we aren’t cyborgs (yet) :)

My partner and I talk about this same topic, specifically sleep scores with the Apple Watch. Yeah, these things should be force multipliers, not second-guessers. I do sometimes go on "technology free" excursions where I wear an old Swatch and leave my phone at home.